FAIR Testing Interoperability Framework

Assess FAIRness consistently across any tool

Many tools already test whether research data is Findable, Accessible, Interoperable, and Reusable. The FAIR Commons ensure they all describe, run, and report assessments the same way.

Today: Incomparable results

More than 30 FAIR assessment tools exist, but each defines its tests and reports its results differently. A "pass" in one tool may not mean the same thing in another.

With FAIR-IF: One shared vocabulary

FAIR-IF provides a shared reference model and vocabulary for every part of the assessment process, from principles down to scores. It is built on W3C standards (DCAT, DQV, PROV).

Seven components across three levels

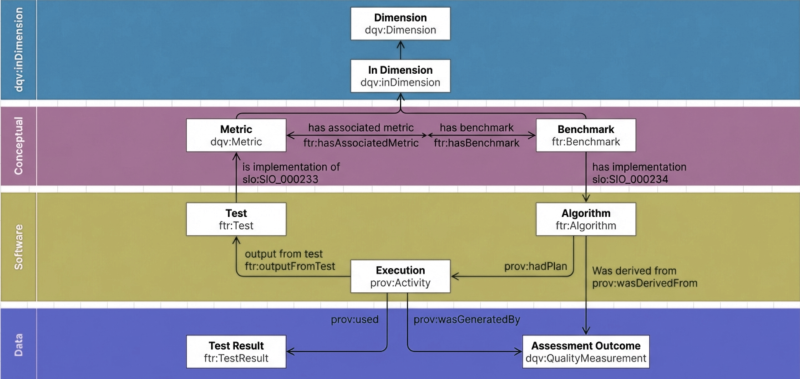

The FAIR-IF Reference Model organises everything needed for a FAIR assessment into three levels: the conceptual definitions, the software that tests them, and the data that comes out. It builds on the W3C Data Quality Vocabulary (DQV), DCAT v3, and PROV.

Conceptual

Dimensions

FAIR Principles (e.g. F1, R1.1)

Metrics

What a test must check

Benchmarks

How a community defines "FAIR"

Software

Tests

Code that checks a resource and outputs pass / fail / indeterminate

Scoring Algorithms

Code that applies a Benchmark

Data

Test Results

pass / fail / indeterminate + provenance

Benchmark Scores

Final assessment + actionable guidance
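
The seven components and three levels above can be sketched as plain data types. This is only an illustrative outline: the class and field names (`Dimension`, `Metric`, `FairTest`, `FairTestResult`, and so on) are assumptions chosen for readability, not terms taken from the FAIR-IF specification or the FTR vocabulary.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, List


class Outcome(Enum):
    """The three result values named in the reference model."""
    PASS = "pass"
    FAIL = "fail"
    INDETERMINATE = "indeterminate"


# --- Conceptual level ---
@dataclass
class Dimension:
    code: str  # a FAIR principle, e.g. "F1" or "R1.1"


@dataclass
class Metric:
    name: str
    dimension: Dimension
    requirement: str  # what a test must check


@dataclass
class Benchmark:
    name: str
    metrics: List[Metric]  # how a community defines "FAIR"


# --- Software level ---
@dataclass
class FairTest:
    metric: Metric
    run: Callable[[str], Outcome]  # takes a resource identifier


# A scoring algorithm applies a Benchmark to a list of results.
ScoringAlgorithm = Callable[[Benchmark, List["FairTestResult"]], "BenchmarkScore"]


# --- Data level ---
@dataclass
class FairTestResult:
    metric: Metric
    subject: str  # the resource that was assessed
    outcome: Outcome
    provenance: Dict[str, str] = field(default_factory=dict)


@dataclass
class BenchmarkScore:
    benchmark: Benchmark
    value: float
    guidance: List[str] = field(default_factory=list)


# Hypothetical example values, for illustration only.
r11 = Metric("licence-declared", Dimension("R1.1"), "Metadata names a licence")
community = Benchmark("example-community", [r11])
always_pass = FairTest(r11, lambda resource_id: Outcome.PASS)
result = FairTestResult(r11, "https://example.org/dataset/1",
                        always_pass.run("https://example.org/dataset/1"))
```

Keeping the three levels as separate types mirrors the separation in the model: definitions can be shared and cited independently of the code that implements them and of the data that code produces.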

The FAIR Testing Reference Model

From "Model" to "Ecosystem"

FTR Vocabulary

The FAIR Testing Resource vocabulary extends W3C DCAT, DQV, and PROV to describe tests, results, metrics, benchmarks, and scores in one consistent ontology.

W3C DCAT, W3C DQV, W3C PROV, ShEx / SHACL

Registries and Catalogues

Communities publish their FAIR definitions and tools register their implementations, making everything discoverable and reusable.

FAIRassist (Metrics & Benchmarks)

Test Catalogue (FAIR Data Point)

Scoring Algorithm Catalogue

What this enables

Practical applications

Community-specific FAIRness

Comparable Assessments

Different tools running the same Benchmark produce comparable results. Move between FAIR Champion, FOOPS!, or any FAIR-IF compatible tool and get consistent outputs.

Actionable Guidance

Step by Step

How to use the FAIR-IF

Define what FAIR means for your community

Create Metrics (what each test must check) and group them into Benchmarks (your community's definition of FAIR). Register them in the FAIRassist registry so they're discoverable and citable with DOIs.

Build or reuse Tests

Write code that implements a Metric, or reuse existing shared tests from FAIR Champion or FOOPS!. Register your test in the Test Catalogue (FAIR Data Point) so other tools can find and run it.
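
As a rough idea of what "code that implements a Metric" might look like, the sketch below checks a hypothetical Metric for principle R1.1 (metadata carries a clear usage licence). The function name, the `metadata` dictionary shape, and the `"license"` key are assumptions made for this example; real tests in FAIR Champion or FOOPS! will differ.

```python
from typing import Mapping, Optional


def check_license_declared(metadata: Optional[Mapping[str, object]]) -> str:
    """Return 'pass', 'fail', or 'indeterminate' for a hypothetical R1.1 Metric.

    `metadata` is assumed to be an already-harvested metadata record,
    or None when the record could not be retrieved at all.
    """
    if metadata is None:
        # Nothing could be inspected, so the test cannot decide either way.
        return "indeterminate"

    license_value = metadata.get("license")
    if isinstance(license_value, str) and license_value.strip():
        return "pass"
    return "fail"


# Hypothetical inputs, for illustration only.
print(check_license_declared({"license": "https://creativecommons.org/licenses/by/4.0/"}))
print(check_license_declared({"title": "Untitled dataset"}))
print(check_license_declared(None))
```

Returning `indeterminate` when no metadata is available, instead of `fail`, keeps "the resource does not meet the Metric" distinct from "the test could not run".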

Run assessments via the standard API

Send a resource identifier to any FAIR-IF compatible tool. The API has two types of calls: GET to discover available tests and benchmarks, POST to trigger an assessment and get results.
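
The two call types could be prepared along the following lines. This is a sketch only: the base URL, the `/tests` and `/benchmarks` paths, the `/assess` suffix, and the `resource_identifier` field are all hypothetical placeholders, since the actual routes and payload are defined by the FAIR-IF API specification and by each tool. The code builds the requests without sending them.

```python
import json
from urllib.request import Request

# Hypothetical base URL of a FAIR-IF compatible tool.
BASE_URL = "https://fair-tool.example.org"


def discovery_request(kind: str) -> Request:
    """Build a GET request that lists available tests or benchmarks."""
    if kind not in ("tests", "benchmarks"):
        raise ValueError("kind must be 'tests' or 'benchmarks'")
    return Request(
        f"{BASE_URL}/{kind}",  # assumed path layout
        headers={"Accept": "application/json"},
        method="GET",
    )


def assessment_request(benchmark_id: str, resource_identifier: str) -> Request:
    """Build a POST request that triggers an assessment of one resource."""
    body = json.dumps({"resource_identifier": resource_identifier}).encode("utf-8")
    return Request(
        f"{BASE_URL}/benchmarks/{benchmark_id}/assess",  # assumed path layout
        data=body,
        headers={"Content-Type": "application/json", "Accept": "application/json"},
        method="POST",
    )


discover = discovery_request("benchmarks")
assess = assessment_request("example-benchmark", "https://doi.org/10.1234/example")
print(discover.get_method(), discover.full_url)
print(assess.get_method(), assess.full_url)
```

Passing either object to `urllib.request.urlopen` would send it; that step is left out here because the endpoint is a placeholder.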

Get standardised results

Every tool returns results in the same format: the FAIR Testing Resource Vocabulary (FTR). Each result is pass, fail, or indeterminate, with full provenance metadata about how the test was run.
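
A consumer of such results might sanity-check each one before using it. The sketch below assumes a result has already been parsed into a Python dictionary; the keys `outcome`, `provenance`, `test`, and `executed_at` are hypothetical stand-ins and are not the actual FTR property names, which are defined by the vocabulary itself.

```python
from typing import List, Mapping

ALLOWED_OUTCOMES = {"pass", "fail", "indeterminate"}
# Hypothetical provenance fields a consumer might insist on.
REQUIRED_PROVENANCE = ("test", "executed_at")


def validate_result(result: Mapping[str, object]) -> List[str]:
    """Return a list of problems found in one parsed result (empty if none)."""
    problems: List[str] = []

    outcome = result.get("outcome")
    if outcome not in ALLOWED_OUTCOMES:
        problems.append(f"unexpected outcome: {outcome!r}")

    provenance = result.get("provenance")
    if not isinstance(provenance, Mapping):
        problems.append("missing provenance metadata")
    else:
        for key in REQUIRED_PROVENANCE:
            if key not in provenance:
                problems.append(f"provenance lacks {key!r}")

    return problems


# Hypothetical parsed results, for illustration only.
good = {
    "outcome": "pass",
    "provenance": {"test": "example-test", "executed_at": "2025-01-01T00:00:00Z"},
}
bad = {"outcome": "maybe", "provenance": {"test": "example-test"}}
print(validate_result(good))
print(validate_result(bad))
```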

Score and guide

A Scoring Algorithm takes the test results and your Benchmark, then produces a final Benchmark Score: a quantitative assessment with actionable guidance on how to improve.
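
One very simple way such an algorithm could work is a weighted pass rate. The sketch below is an assumption made for illustration, not a scoring method prescribed by FAIR-IF: the Benchmark is reduced to a hypothetical mapping from metric name to weight, and the guidance strings are invented.

```python
from typing import Dict, List, Tuple


def score_benchmark(
    weights: Dict[str, float],
    outcomes: Dict[str, str],
) -> Tuple[float, List[str]]:
    """Return (score between 0 and 1, guidance messages).

    `weights` maps each metric in the benchmark to its weight;
    `outcomes` maps metric names to 'pass', 'fail', or 'indeterminate'.
    A metric with no reported outcome is treated as indeterminate.
    """
    total = sum(weights.values())
    earned = 0.0
    guidance: List[str] = []

    for metric, weight in weights.items():
        outcome = outcomes.get(metric, "indeterminate")
        if outcome == "pass":
            earned += weight
        elif outcome == "fail":
            guidance.append(f"{metric}: test failed; review this metric's requirement.")
        else:
            guidance.append(f"{metric}: no conclusive result; check that the test could run.")

    score = earned / total if total else 0.0
    return score, guidance


# Hypothetical benchmark weights and results, for illustration only.
weights = {"F1": 2.0, "A1": 1.0, "R1.1": 1.0}
outcomes = {"F1": "pass", "A1": "fail"}
score, guidance = score_benchmark(weights, outcomes)
print(score)  # 0.5
for line in guidance:
    print(line)
```

A community could equally choose thresholds, mandatory metrics, or tiered levels; the point is only that the Benchmark supplies the rules and the algorithm applies them to the test results.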